#3 Classification: MobileNet V1 on CIFAR100

This page shows how Nota compressed and optimized the MobileNet V1 model using NetsPresso Model Compressor and Nota’s in-house search algorithm.

Preparations

Below is a brief description of the input model and the training details used in this example.

1) Model & Training Code

- Data: CIFAR100

- Model: MobileNetV1 (TensorFlow)

Model and training code are available in the [NetsPresso Model Compressor ModelZoo](https://github.com/Nota-NetsPresso/NetsPresso-CompressionToolkit-ModelZoo/tree/main/models/tensorflow).

Training code is required to fine-tune the model after compression.

2) Train Config

The following table describes the configurations for training:

| Parameter | Value |
|---|---|
| Normalization | Mean: [0.4914, 0.4822, 0.4465], SD: [0.2023, 0.1994, 0.2010] |
| Data augmentation | RandomCrop(32, padding=4), RandomHorizontalFlip |
| Learning Rate | 0.1 |
| Optimizer | SGD (momentum: 0.9) |
| Batch size | 128 |
| Epochs | 100 |
| LR Scheduler | ReduceLROnPlateau |
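The preprocessing rows in the table use torchvision-style transform names. To show what they do, here is a minimal NumPy sketch that re-implements them for a single 32×32 RGB image. It is an illustration under our own assumptions (HWC `uint8` input, zero padding for the crop, flip probability 0.5), not the ModelZoo's training code; `augment` and `normalize` are hypothetical helpers.

```python
import numpy as np

# Per-channel statistics from the Train Config table.
MEAN = np.array([0.4914, 0.4822, 0.4465], dtype=np.float32)
STD = np.array([0.2023, 0.1994, 0.2010], dtype=np.float32)


def augment(img: np.ndarray, rng: np.random.Generator, pad: int = 4) -> np.ndarray:
    """RandomCrop(32, padding=4) followed by RandomHorizontalFlip on one HWC image."""
    h, w, _ = img.shape
    padded = np.pad(img, ((pad, pad), (pad, pad), (0, 0)))  # zero-pad height and width
    top = rng.integers(0, 2 * pad + 1)   # crop offset in [0, 2 * pad]
    left = rng.integers(0, 2 * pad + 1)
    out = padded[top:top + h, left:left + w]
    if rng.random() < 0.5:
        out = out[:, ::-1]  # flip along the width axis
    return out


def normalize(img: np.ndarray) -> np.ndarray:
    """Scale to [0, 1], then standardize each channel with the table's mean and SD."""
    return (img.astype(np.float32) / 255.0 - MEAN) / STD


rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(32, 32, 3)).astype(np.uint8)  # stand-in for a CIFAR100 image
x = normalize(augment(image, rng))
print(x.shape, x.dtype)  # (32, 32, 3) float32
```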

Simple Compression

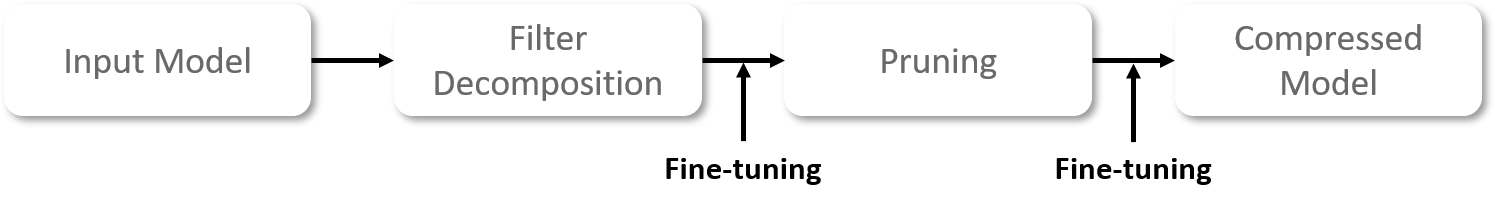

Stage 1: Filter Decomposition

First, we applied Filter Decomposition (FD). FD aims to approximate the original filters' representation with fewer filters (a lower rank).

Since each layer carries a different amount of information, finding the optimal rank for each layer is crucial to minimizing the accuracy drop.

Please refer to the attached document if you would like to learn more about FD.

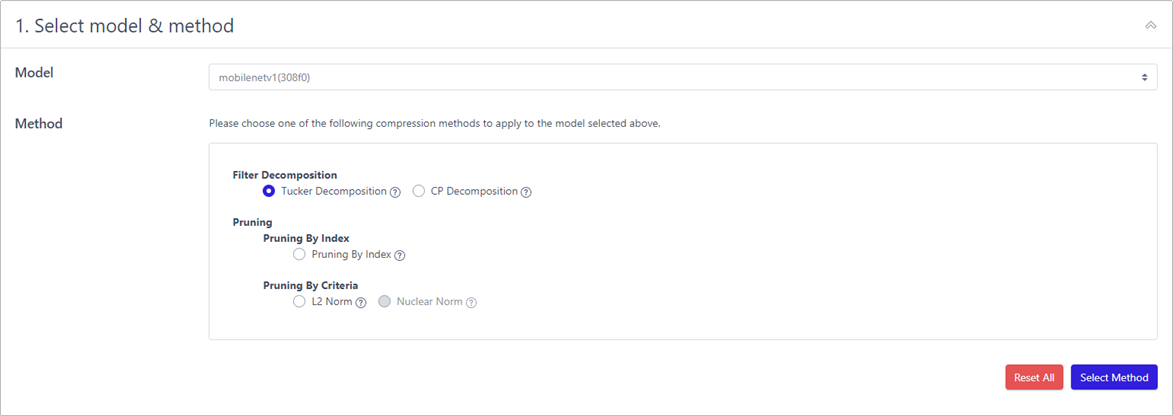

NetsPresso Compression Toolkit (NPTK) offers two options for applying FD:

- Use our recommended values (automatically calculated)

- Use your own values (customized)

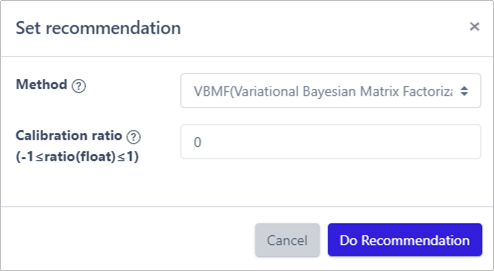

The recommendation function, based on Variational Bayesian Matrix Factorization (VBMF), suggests an optimal rank for each layer within about a minute.

1) Tucker Decomposition

The calibration ratio lets you easily increase or decrease each filter's rank to search for a better-performing model. The new rank is the remaining rank plus (removed rank × calibration ratio), where the removed rank is the number of ranks the recommendation dropped. The ratio defaults to 0.

More details are available in the Calibration Ratio Description.
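The calibration rule can be written as a small function. This is a sketch: `calibrated_rank` is a hypothetical helper, and the rounding, the clamping to [1, original rank], and the use of a negative ratio to lower the rank are our assumptions rather than documented NPTK behavior.

```python
def calibrated_rank(original_rank: int, recommended_rank: int, ratio: float = 0.0) -> int:
    """Hypothetical helper: new rank = remaining rank + removed rank * ratio."""
    removed = original_rank - recommended_rank  # ranks the recommendation dropped
    new_rank = recommended_rank + removed * ratio
    # Assumption: round to the nearest integer and keep the result in [1, original_rank].
    return min(max(round(new_rank), 1), original_rank)


print(calibrated_rank(512, 32))         # 32 (default ratio 0 keeps the recommendation)
print(calibrated_rank(512, 32, 0.1))    # 80 (32 + 480 * 0.1)
print(calibrated_rank(512, 32, -0.05))  # 8 (assumed: a negative ratio lowers the rank)
```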

This is what you will see when you click the Recommendation button.

Why is there no recommendation for the first layer?

Because the first layer has only 3 input channels ("In Channel" is 3, one per RGB channel), it carries too little information to decompose.

The recommendation function automatically excludes such layers to avoid a significant drop in accuracy.
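To make the idea concrete, here is a minimal NumPy sketch of Tucker-2 decomposition of a single conv kernel via truncated HOSVD, the textbook variant that factors only the input- and output-channel modes. It is our illustration, not NPTK's implementation: the ranks are passed in by hand instead of coming from VBMF, and the kernel layout (kh, kw, C_in, C_out) is assumed to follow TensorFlow's Conv2D convention.

```python
import numpy as np


def tucker2_conv(kernel: np.ndarray, in_rank: int, out_rank: int):
    """Tucker-2 decomposition (truncated HOSVD) of a conv kernel.

    `kernel` has layout (kh, kw, C_in, C_out). Only the two channel modes are
    factored; the spatial modes stay inside the core. Returns three kernels
    that together approximate the original conv:
      first: (1, 1, C_in, in_rank)        1x1 conv that shrinks the input channels
      core:  (kh, kw, in_rank, out_rank)  spatial conv on the reduced channels
      last:  (1, 1, out_rank, C_out)      1x1 conv that restores the output channels
    """
    _, _, c_in, c_out = kernel.shape
    # Mode-3 unfolding (rows = input channels); keep the leading left singular vectors.
    unfold_in = np.moveaxis(kernel, 2, 0).reshape(c_in, -1)
    u_in = np.linalg.svd(unfold_in, full_matrices=False)[0][:, :in_rank]
    # Mode-4 unfolding (rows = output channels).
    unfold_out = np.moveaxis(kernel, 3, 0).reshape(c_out, -1)
    u_out = np.linalg.svd(unfold_out, full_matrices=False)[0][:, :out_rank]
    # Core tensor: project both channel modes onto the retained singular vectors.
    core = np.einsum("hwio,ir,os->hwrs", kernel, u_in, u_out)
    return u_in[None, None], core, u_out.T[None, None]


rng = np.random.default_rng(0)
kernel = rng.standard_normal((3, 3, 64, 128))
first, core, last = tucker2_conv(kernel, in_rank=16, out_rank=32)
print(first.shape, core.shape, last.shape)
# (1, 1, 64, 16) (3, 3, 16, 32) (1, 1, 32, 128)
```

With C_in = 3, as in the first layer, `in_rank` can be at most 3, so the decomposition leaves little room for savings.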

2) Fine-tuning

Compression typically causes a significant drop in accuracy, so fine-tuning is essential to recover the original performance.

To fine-tune the model, run the training code provided in the NetsPresso Compression Toolkit ModelZoo.

Unlike the VGG19 example, we kept every training parameter the same except the learning rate, which was reduced to 1/10 of its original value (from 0.1 to 0.01).
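The fine-tuning setup differs from the original training run only in its learning rate. The sketch below expresses that as a config derivation and adds a simplified, pure-Python stand-in for ReduceLROnPlateau to show how the reduced learning rate would decay on a plateau. `TrainConfig` and `PlateauScheduler` are hypothetical names, and the scheduler's factor, patience, and min_delta values are assumptions, since the page does not state them.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TrainConfig:
    """Training settings from the Train Config table."""
    learning_rate: float = 0.1
    momentum: float = 0.9  # SGD momentum
    batch_size: int = 128
    epochs: int = 100


class PlateauScheduler:
    """Simplified ReduceLROnPlateau: multiply the LR by `factor` once the monitored
    loss has not improved by more than `min_delta` for `patience` epochs in a row.
    The default factor, patience, and min_delta are assumed values."""

    def __init__(self, lr: float, factor: float = 0.1, patience: int = 10,
                 min_delta: float = 1e-4):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.wait = 0

    def step(self, val_loss: float) -> float:
        if val_loss < self.best - self.min_delta:
            self.best, self.wait = val_loss, 0  # improvement: reset the counter
        else:
            self.wait += 1
            if self.wait >= self.patience:
                self.lr *= self.factor  # plateau: decay the learning rate
                self.wait = 0
        return self.lr


base = TrainConfig()
# Fine-tuning after compression: identical settings, learning rate divided by 10.
finetune = replace(base, learning_rate=base.learning_rate / 10)

sched = PlateauScheduler(finetune.learning_rate, patience=2)  # short patience for the demo
for loss in [1.0, 0.9, 0.9, 0.9]:
    lr = sched.step(loss)
print(finetune, lr)  # lr has dropped from 0.01 to about 0.001
```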

Stage 2: Pruning

Next, we prune the model to make it even lighter. The goal is to reduce computational cost and accelerate the model by removing less important, redundant filters.

Please refer to the attached document if you would like to learn more about pruning.

Because layers vary widely in importance, model performance can change dramatically depending on which filters are removed.

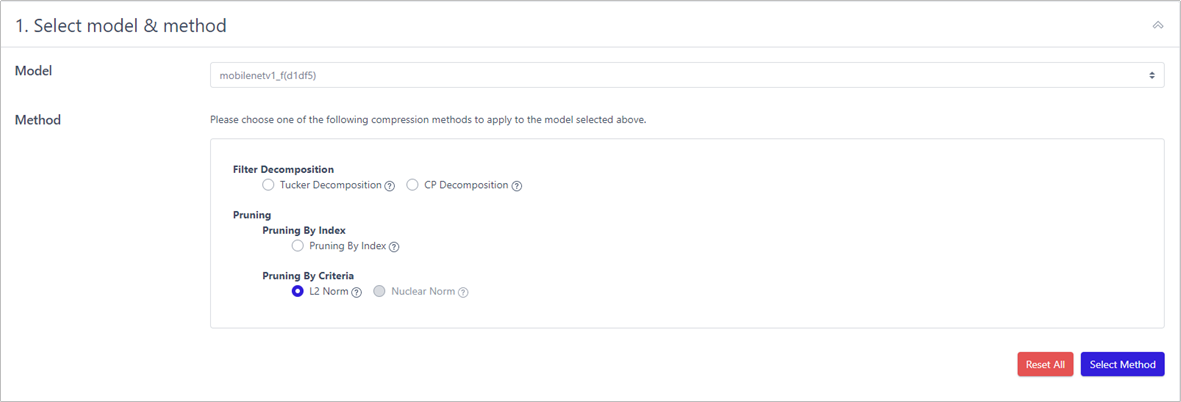

Just like FD, NPTK offers two options to implement pruning:

- Use our recommended values (automatically calculated)

- Use your own values (customized)

The recommendation function, based on SLAMP, suggests a pruning ratio for each layer within a few seconds.

1) L2 Norm

The pruning ratio is the fraction of filters to prune. As shown above, pruning_ratio = 0.5 removes 50% of the filters in each layer it is applied to.
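L2-norm filter pruning ranks each output filter by the L2 norm of its weights and removes the smallest ones. Below is a minimal NumPy sketch for a single layer, assuming a TensorFlow-style kernel layout (kh, kw, C_in, C_out) and rounding the number of kept filters to the nearest integer; `l2_prune_filters` is a hypothetical helper, not an NPTK function.

```python
import numpy as np


def l2_prune_filters(kernel: np.ndarray, ratio: float):
    """Drop the `ratio` fraction of output filters with the smallest L2 norm.

    Returns the pruned kernel and the indices of the kept filters.
    """
    if not 0.0 <= ratio < 1.0:
        raise ValueError("ratio must be in [0, 1)")
    out_ch = kernel.shape[-1]
    # One L2 norm per output filter: flatten every axis except the last.
    norms = np.linalg.norm(kernel.reshape(-1, out_ch), axis=0)
    n_keep = max(1, round(out_ch * (1.0 - ratio)))
    # Indices of the n_keep largest-norm filters, restored to their original order.
    kept = np.sort(np.argsort(norms)[::-1][:n_keep])
    return kernel[..., kept], kept


# Toy 1x1 kernel with 8 filters; filter j is filled with the value j,
# so a larger j means a larger L2 norm.
k = np.ones((1, 1, 4, 8)) * np.arange(8)
pruned, kept = l2_prune_filters(k, ratio=0.5)
print(pruned.shape, kept)  # (1, 1, 4, 4) [4 5 6 7]
```

In a real network, the following layer (for MobileNet V1, the next depthwise conv and its BatchNorm) must be sliced with the same `kept` indices so that the channel counts still match.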

2) Fine-tuning

Compression typically causes a significant drop in accuracy, so fine-tuning is essential to recover the original performance.

To fine-tune the model, run the training code provided in the NetsPresso Compression Toolkit ModelZoo.

Unlike the VGG19 example, we kept every training parameter the same except the learning rate, which was reduced to 1/10 of its original value (from 0.1 to 0.01).

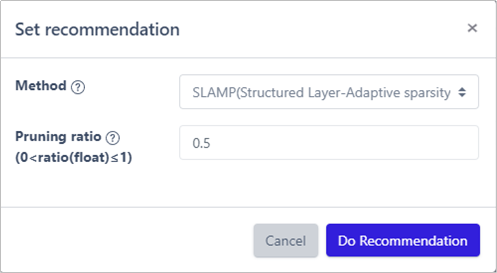

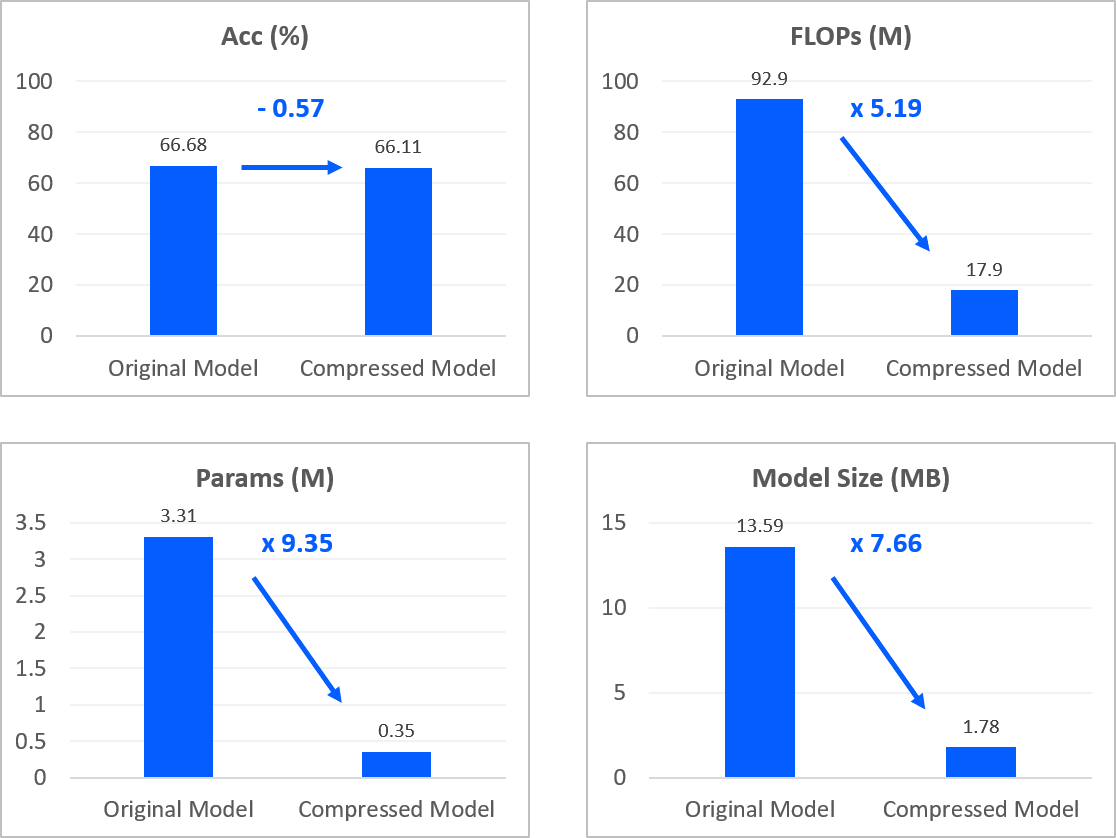

Result of Compression

| Name | Acc (%) | FLOPs (M) | Params (M) | Model Size (MB) |
|---|---|---|---|---|
| Original | 66.68 | 92.90 | 3.31 | 13.59 |
| Pruning | 66.44 (-0.24) | 54.99 (1.69x) | 1.59 (2.09x) | 6.79 (2.00x) |
| Pruning + FD | 66.32 (-0.36) | 26.09 (3.56x) | 0.53 (6.24x) | 2.52 (5.4x) |

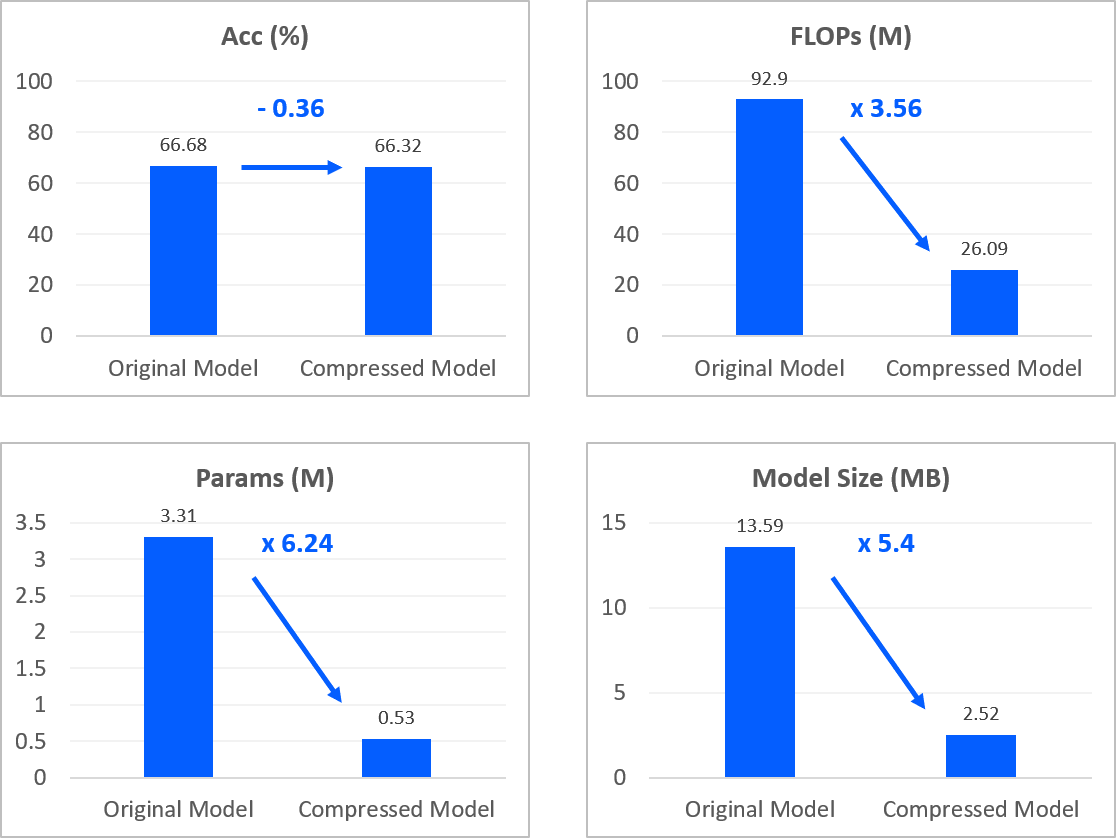

Search-Based Compression

To get the best results, we used Nota's in-house search algorithm to find better compression parameters (ranks and pruning ratios).

The algorithm is for internal use only and is not included in this trial version. If you are interested in our solution, please reach out to us.

The figure below illustrates our procedure.

The compression parameters found by our search algorithm are listed below. Using these parameters, you can create a model with the same performance.

Why does my model's performance differ from Nota's?

Fine-tuning is stochastic (for example, random cropping, flipping, and data shuffling), so accuracy may vary slightly between runs.

Stage 1: Pruning

1) Pruning Compression Parameter

| Layer name | Pruning Ratio |
|---|---|
| `conv1` | 0.6 |
| `layers.0.conv2` | 0.2 |
| `layers.1.conv2` | 0.2 |
| `layers.2.conv2` | 0.4 |
| `layers.3.conv2` | 0.4 |
| `layers.4.conv2` | 0.6 |
| `layers.5.conv2` | 0.6 |
| `layers.6.conv2` | 0.7 |
| `layers.7.conv2` | 0.7 |
| `layers.8.conv2` | 0.7 |
| `layers.9.conv2` | 0.6 |
| `layers.10.conv2` | 0.6 |
| `layers.11.conv2` | 0.8 |
| `layers.12.conv2` | 0.3 |
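To see what these ratios mean in filter counts, the snippet below applies them to each layer's output width. The widths are an assumption: they follow the common CIFAR adaptation of MobileNet V1 (32 filters in `conv1`, then 64 to 1024 in the pointwise convs) and should be checked against the ModelZoo model. The rounding rule in the hypothetical `kept_filters` helper is also assumed.

```python
# Search-based pruning ratios, copied from the list above.
PRUNING_RATIOS = {
    "conv1": 0.6,
    "layers.0.conv2": 0.2, "layers.1.conv2": 0.2,
    "layers.2.conv2": 0.4, "layers.3.conv2": 0.4,
    "layers.4.conv2": 0.6, "layers.5.conv2": 0.6,
    "layers.6.conv2": 0.7, "layers.7.conv2": 0.7, "layers.8.conv2": 0.7,
    "layers.9.conv2": 0.6, "layers.10.conv2": 0.6,
    "layers.11.conv2": 0.8, "layers.12.conv2": 0.3,
}

# Assumed output widths (common CIFAR variant of MobileNet V1); verify against the ModelZoo model.
WIDTHS = {"conv1": 32}
for i, w in enumerate([64, 128, 128, 256, 256, 512, 512, 512, 512, 512, 512, 1024, 1024]):
    WIDTHS[f"layers.{i}.conv2"] = w


def kept_filters(width: int, ratio: float) -> int:
    """Filters left after pruning `ratio` of `width` (rounding to nearest is assumed)."""
    return max(1, round(width * (1 - ratio)))


for name, ratio in PRUNING_RATIOS.items():
    print(f"{name:>16}: {WIDTHS[name]:4d} -> {kept_filters(WIDTHS[name], ratio):4d}")
```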

Stage 2: Filter Decomposition

1) Filter Decomposition Compression Parameter

| Layer name | In Rank | Out Rank |
|---|---|---|
| `layers.6.conv2` | 32 | 29 |
| `layers.7.conv2` | 31 | 31 |
| `layers.8.conv2` | 31 | 31 |
| `layers.9.conv2` | 32 | 34 |
| `layers.10.conv2` | 40 | 40 |
| `layers.11.conv2` | 41 | 41 |
| `layers.12.conv2` | 89 | 114 |
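In Rank and Out Rank set the widths of the two inner layers produced by Tucker decomposition. Assuming the `layers.*.conv2` layers are 1×1 pointwise convs without bias (as in a standard MobileNet V1 block), the parameter count before and after decomposition can be compared with the hypothetical helpers below. The channel counts in the example are hypothetical values derived from a standard width of 1024 channels and the pruning ratios listed above (1024 × 0.2 ≈ 205 inputs, 1024 × 0.7 ≈ 717 outputs), not measured numbers.

```python
def conv_params(c_in: int, c_out: int, k: int = 1) -> int:
    """Weights of a k x k conv without bias (assumed: followed by BatchNorm)."""
    return k * k * c_in * c_out


def tucker2_params(c_in: int, c_out: int, in_rank: int, out_rank: int, k: int = 1) -> int:
    """1x1 (C_in -> In Rank) + k x k (In Rank -> Out Rank) + 1x1 (Out Rank -> C_out)."""
    return c_in * in_rank + k * k * in_rank * out_rank + out_rank * c_out


# Hypothetical channel counts for layers.12.conv2 after pruning.
c_in, c_out = 205, 717
print(conv_params(c_in, c_out))              # 146985
print(tucker2_params(c_in, c_out, 89, 114))  # 110129
```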

Result of Compression

| Name | Acc (%) | FLOPs (M) | Params (M) | Model Size (MB) |
|---|---|---|---|---|
| Original | 66.68 | 92.90 | 3.31 | 13.59 |
| Pruning | 66.35 (-0.33) | 21.20 (4.38x) | 0.50 (6.6x) | 2.31 (5.88x) |
| Pruning + FD | 66.11 (-0.57) | 17.90 (5.19x) | 0.35 (9.35x) | 1.78 (7.66x) |

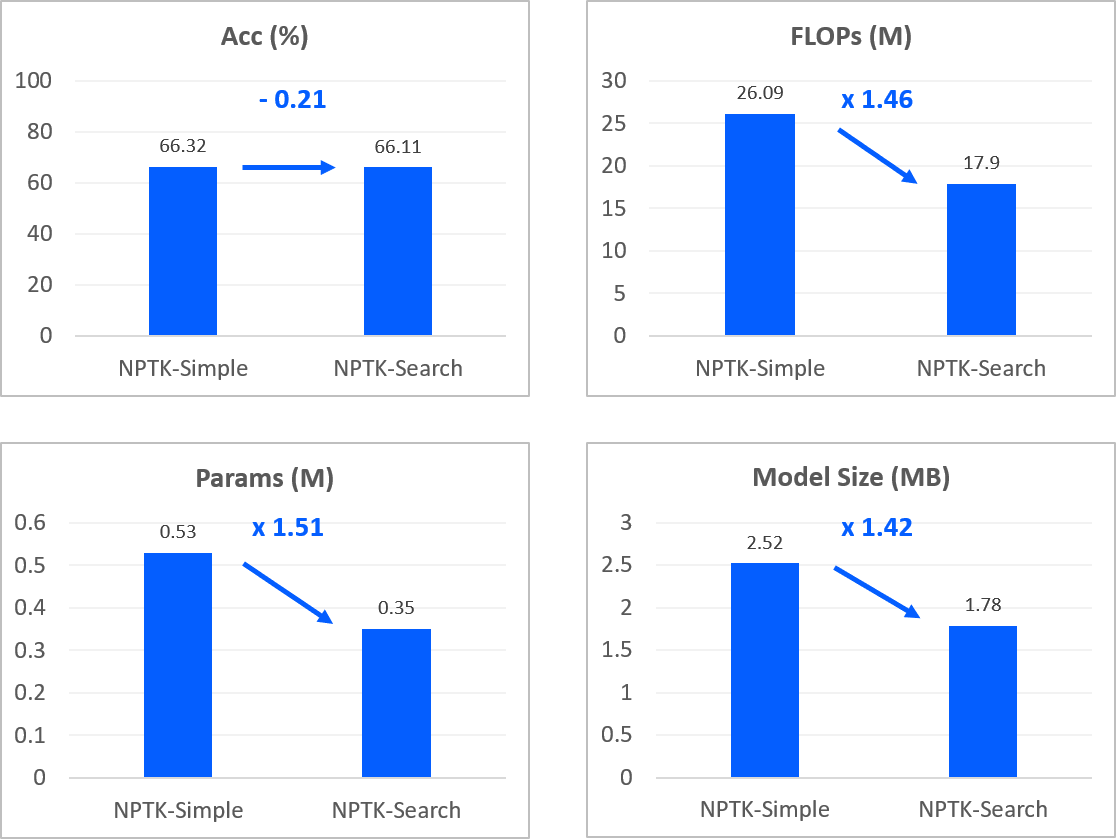

NPTK-Simple vs NPTK-Search

The results from Nota-Simple and Nota-Search

Summary

We have walked you through how to compress a CNN model with NetsPresso Compression Toolkit (NPTK). As the results show, NPTK reduced FLOPs, parameter count, and model size while largely preserving the accuracy of the original model.

More posts on object detection and super resolution are coming. To stay up to date, sign up or subscribe at netspresso.ai.
