Step 3: Convert model (beta)

Convert the model format according to the target device.

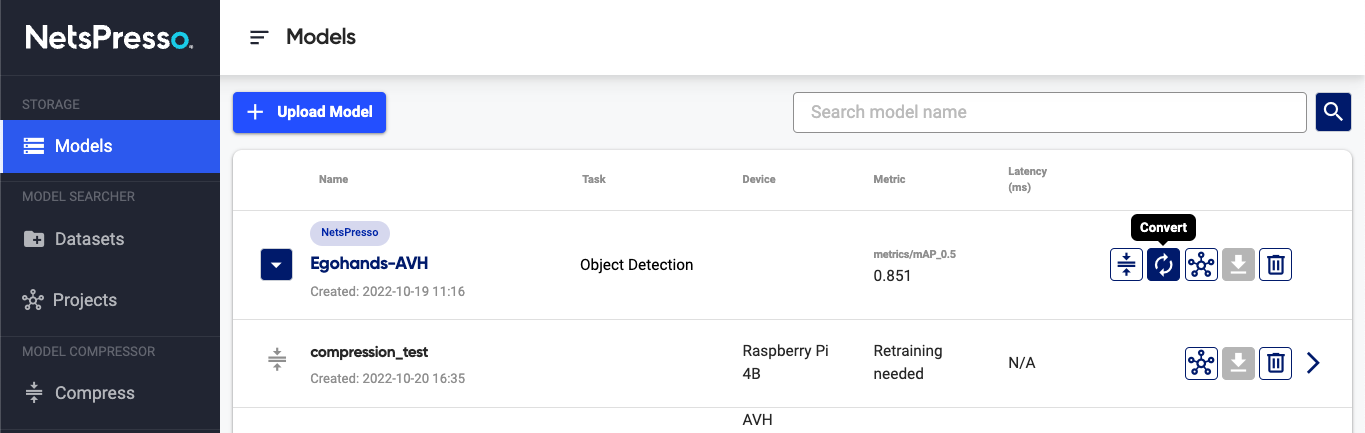

1. Go to the Convert page

Click the Convert button on the Models page.

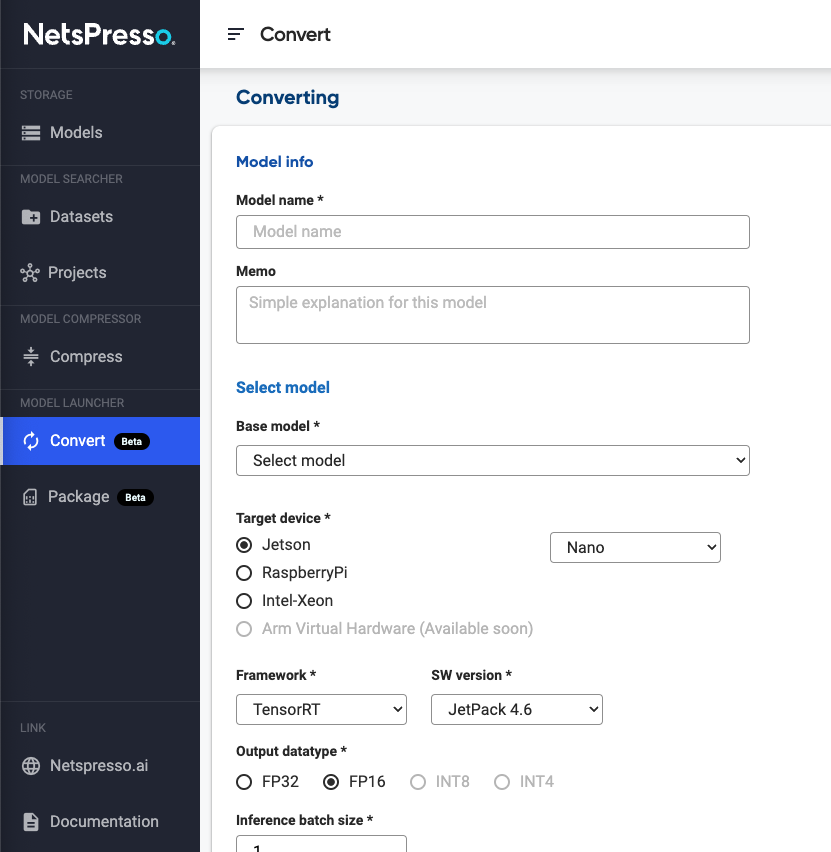

2. Convert the model

Enter a name and memo for the converted model. Then select the base model to convert and the target hardware on which to benchmark it.

The available conversion options depend on the framework of the base model.

- Models built with Model Searcher → TensorRT, TensorFlow Lite, OpenVINO

- Custom models

  - ONNX → TensorRT, TensorFlow Lite, OpenVINO
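The options listed above can be read as a simple lookup from base model type to target framework. The following is only an illustrative sketch for reasoning about which conversions are offered. The names `CONVERSION_OPTIONS`, `available_targets`, and `can_convert` are hypothetical and are not part of any product API; the Convert page itself remains the source of truth.

```python
from typing import Dict, List

# Hypothetical lookup table mirroring the list above.
# Keys and function names are illustrative assumptions, not a real API.
CONVERSION_OPTIONS: Dict[str, List[str]] = {
    "model_searcher": ["TensorRT", "TensorFlow Lite", "OpenVINO"],
    "onnx": ["TensorRT", "TensorFlow Lite", "OpenVINO"],  # custom models
}


def available_targets(base_framework: str) -> List[str]:
    """Return the target frameworks listed for a base model type."""
    # Normalize input such as "Model Searcher" to the key "model_searcher".
    key = base_framework.strip().lower().replace(" ", "_")
    # Unknown base frameworks have no listed conversion options.
    return list(CONVERSION_OPTIONS.get(key, []))


def can_convert(base_framework: str, target_framework: str) -> bool:
    """Check whether a base/target pair appears in the list above."""
    return target_framework in available_targets(base_framework)
```

For example, `can_convert("ONNX", "OpenVINO")` evaluates to `True`, while a base framework that is not listed, such as a hypothetical `"PyTorch"` entry, yields an empty list of targets.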

Click the Start converting button to convert the model. Conversion to TensorRT for the NVIDIA Jetson family may take up to 1 hour.

3. Check the conversion result

The converted model is displayed on the Models page with performance benchmarks for the selected target hardware.
